Editor’s note: Vadim discusses a fraud detection AI agent blueprint by ScienceSoft, first presented at the Insurance Transformation Summit 2025 in Boston. He shows how multi-agent orchestration with explicit guardrails keeps agentic investigation workflows safe, controllable, and compliant. He also addresses practical questions raised by U.S. insurance leaders at the event. If you want to discuss feasible agent launch paths for your organisation, Vadim and other AI engineering consultants at ScienceSoft are ready to assist. [1]

Agentic AI has moved from proof‑of‑concept to pilot programmes across the insurance industry, delivering measurable gains in accuracy and investigative throughput. According to the original report, however, adoption is tempered by a paradox: while more than 80% of insurers are exploring or piloting agentic workflows, only around 4% say they fully trust those workflows in production. That gap frames the central engineering challenge: how to scale multi‑agent investigations without surrendering control, traceability or regulatory compliance. [1]

ScienceSoft’s blueprint, introduced at the summit, recommends separating duties among narrow, specialised agents so each component has tightly scoped tasks and minimal data access. The company says the blast radius shrinks further when agents receive short‑lived credentials and are governed by attribute‑based access controls keyed to insurance line, data type and regulatory class, while automated prompt redaction ensures agents see only the data they require. Industry vendors similarly promote task‑focused agents: Glide highlights anomaly‑detection agents to surface irregular claims, and Supervity offers agents that automate intake, validation and adjudication to reduce human workload. [1][2][3]
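To make the access-scoping idea concrete, the following is a minimal sketch in Python, not ScienceSoft's implementation. The `AgentScope` class, the `is_allowed` and `redact_prompt` helpers, and the SSN-style pattern are all hypothetical names chosen for illustration; a production system would rely on its identity provider and a far broader redaction rule set.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Attributes an agent is cleared for (hypothetical structure)."""
    lines: frozenset       # insurance lines, e.g. {"auto"}
    data_types: frozenset  # data types, e.g. {"claim_form"}


def is_allowed(scope: AgentScope, line: str, data_type: str) -> bool:
    """Attribute-based check: both attributes must be in the agent's scope."""
    return line in scope.lines and data_type in scope.data_types


# Assumed pattern for US Social Security numbers; real redaction needs more rules.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_prompt(text: str) -> str:
    """Mask SSN-like strings before the prompt reaches an agent."""
    return SSN_PATTERN.sub("[REDACTED_SSN]", text)


intake_scope = AgentScope(
    lines=frozenset({"auto"}),
    data_types=frozenset({"claim_form"}),
)
```

In this sketch an intake agent scoped to auto claim forms would be refused a health record, and any SSN-like string is masked before the prompt is assembled.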

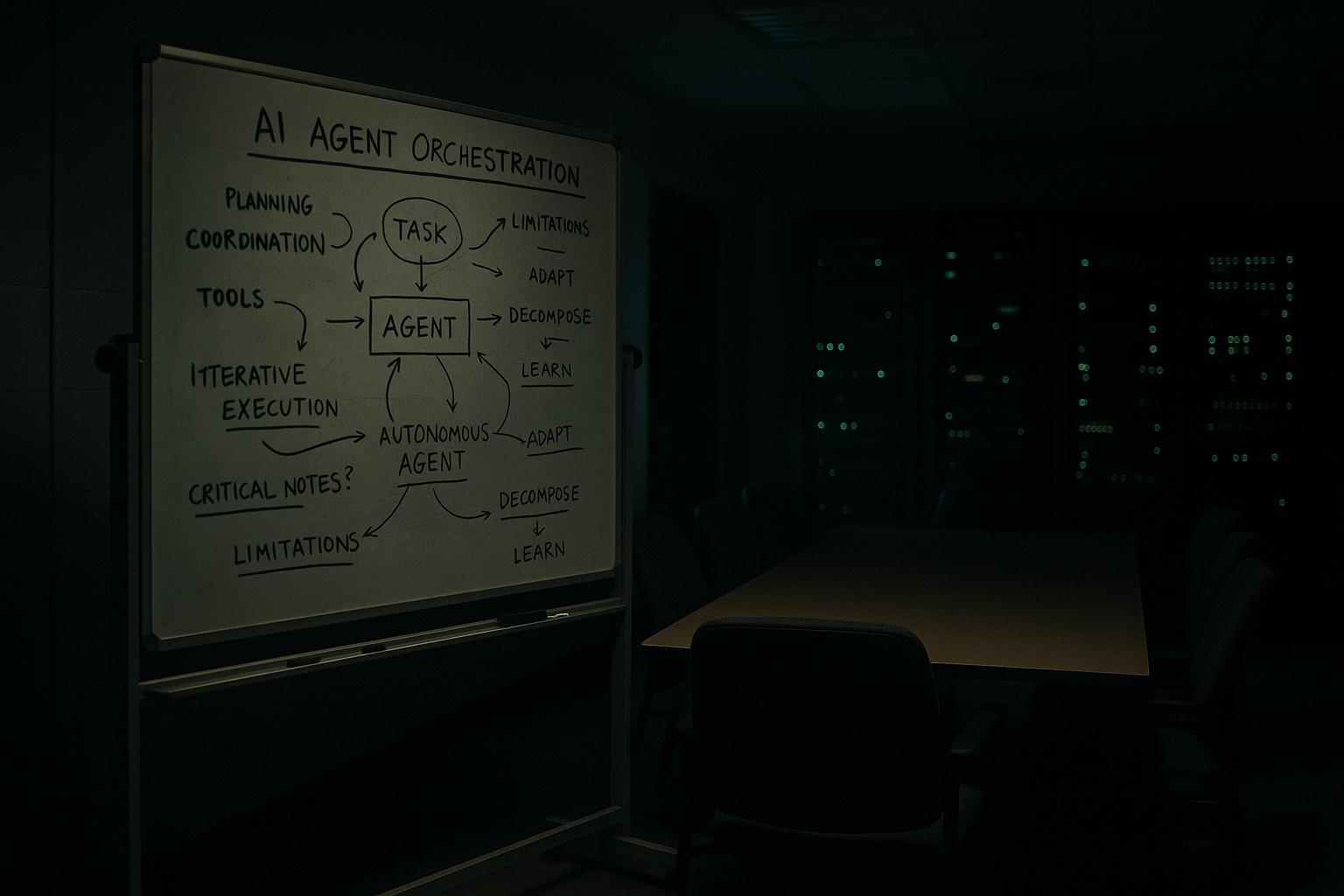

A second keystone is orchestration and rule enforcement. ScienceSoft describes the orchestrator as the engine that decomposes an investigation, assigns steps to the right agents and enforces rule‑based guardrails and human approval triggers. The company recommends managed cloud agent frameworks such as Amazon Bedrock AgentCore and Azure AI Foundry, or enterprise low‑code platforms for insurers already invested in tools like Power Automate or Camunda. Vendors in the market echo this approach: Salesforce and others emphasise orchestration plus observability as central to reliable agent deployments. [1][7]
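The orchestration pattern described above can be sketched in a few lines of Python. This is an illustrative assumption rather than the API of Bedrock AgentCore, Azure AI Foundry or any other framework: `Step`, `run_investigation` and the `approve` callback are hypothetical names, and the agents are plain functions standing in for model calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One unit of an investigation (hypothetical structure)."""
    name: str
    agent: Callable[[dict], dict]
    # Rule-based guardrail: returns True when a human must sign off first.
    needs_approval: Callable[[dict], bool] = lambda case: False


def run_investigation(case: dict, steps: list,
                      approve: Callable[[str, dict], bool]) -> dict:
    """Run steps in order, halting when a required approval is refused."""
    case = dict(case)  # work on a copy so the caller's record is untouched
    for step in steps:
        if step.needs_approval(case) and not approve(step.name, case):
            case["status"] = f"halted_before:{step.name}"
            return case
        case = {**case, **step.agent(case)}
    case["status"] = "completed"
    return case


steps = [
    Step("score", lambda c: {"risk": 0.9 if c["amount"] > 10_000 else 0.1}),
    Step("escalate", lambda c: {"referred_to_siu": True},
         needs_approval=lambda c: c["risk"] > 0.5),
]
claim = {"claim_id": "C-1", "amount": 25_000}
denied = run_investigation(claim, steps, approve=lambda name, c: False)
granted = run_investigation(claim, steps, approve=lambda name, c: True)
```

The point of the design is that the approval rule lives in the orchestrator rather than inside any agent, so an agent cannot skip it.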

Trust boundaries and an "AI firewall" are positioned as indispensable operational controls. The firewall validates inputs, constrains outputs, enforces schema restrictions, blocks unauthorised tool calls and detects prompt injection attempts. ScienceSoft recommends augmenting cloud provider guardrails with custom prompt validators and strict JSON schemas to ensure agents cannot, for example, invoke payment APIs or exfiltrate PII. Comparable vendor offerings in the sector, including Kirha’s real‑time claim challenges, RhinoAgents’ configurable fraud automation and Atna’s focus on entity verification, demonstrate parallel patterns of blocking misuse and preserving investigative integrity. [1][4][5][6]
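As a rough illustration of the output-constraining side of such a firewall, the sketch below validates an agent's proposed tool call against an allowlist with strict argument types. The tool names, the `{"tool": ..., "args": ...}` envelope and the `FirewallViolation` exception are assumptions made for this example; they do not come from the report or from any vendor's product.

```python
import json

# Hypothetical allowlist: tool name -> required argument names and types.
ALLOWED_TOOLS = {
    "lookup_policy": {"policy_id": str},
    "fetch_claim_history": {"claimant_token": str, "limit": int},
}


class FirewallViolation(Exception):
    """Raised when an agent output fails validation."""


def validate_tool_call(raw: str) -> dict:
    """Parse an agent's JSON output and reject anything off-schema."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise FirewallViolation("output is not valid JSON") from exc
    if not isinstance(call, dict) or set(call) != {"tool", "args"}:
        raise FirewallViolation("unexpected top-level shape")
    tool, args = call["tool"], call["args"]
    if not isinstance(tool, str) or tool not in ALLOWED_TOOLS:
        raise FirewallViolation(f"tool not allowed: {tool!r}")
    schema = ALLOWED_TOOLS[tool]
    if not isinstance(args, dict) or set(args) != set(schema):
        raise FirewallViolation("arguments do not match schema")
    for key, expected in schema.items():
        # Exact type match, so a bool cannot pass as an int.
        if type(args[key]) is not expected:
            raise FirewallViolation(f"wrong type for argument {key!r}")
    return call
```

Because payment tools are simply absent from the allowlist, a call such as `{"tool": "issue_payment", ...}` is rejected before it reaches any downstream API. Prompt-injection detection on inputs would be a separate layer and is not shown here.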

Explainability and tamper‑evident traceability form the compliance backbone. The report states engineers should retain full histories of prompts, agent configurations and operating rules, logging every action into immutable stores so insurers can reconstruct decisions for regulators or claimants. ScienceSoft points to S3 Object Lock, CloudWatch, Langfuse and OpenTelemetry as examples of tools to capture traces, and to model‑explainability services such as Amazon SageMaker Clarify and observability suites like LangSmith to surface the model‑level rationale and RAG sources the agents relied on. Market suppliers also integrate RAG, vector indexes and graph databases to ground agent reasoning in auditable source content. [1][5][3]
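One common way to make a log tamper-evident is a hash chain, in which each entry commits to the hash of the one before it. The sketch below is an illustration of that general technique under assumed names (`append_entry`, `verify_chain`) and an assumed entry layout; it is not described in the report and would complement, not replace, write-once storage such as S3 Object Lock.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed placeholder hash for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous hash together with a canonical form of the event."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event, linking it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"agent": "intake", "action": "read_claim", "claim_id": "C-1"})
append_entry(audit_log, {"agent": "scoring", "action": "score", "claim_id": "C-1"})
```

If anyone later alters a recorded prompt or action, `verify_chain` returns `False` for the whole log, which is what lets an auditor trust the reconstruction.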

Operational oversight and observability are critical to detect drift and misuse once agents are live. ScienceSoft recommends monitoring unusual policy read volumes, anomalous tool‑call sequences and spikes in evidence retrieval, and integrating agent telemetry with SIEM systems so security and engineering can respond rapidly. Industry solutions from Glide, Supervity and others emphasise real‑time anomaly detection and dashboards that feed security and business workflows, enabling shadow‑mode comparisons and phased rollouts to mitigate business disruption. [1][2][3]
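A simple way to flag the kind of unusual read volume mentioned above is to compare the current count with a recent baseline. The following sketch uses a z-score over past hourly counts; the function name, the threshold of 3.0 and the minimum history length are assumptions for illustration, and a real deployment would more likely express this as a SIEM detection rule.

```python
from statistics import mean, pstdev


def is_volume_anomalous(history: list, current: int,
                        threshold: float = 3.0, min_samples: int = 5) -> bool:
    """Flag `current` when it exceeds the baseline by `threshold` std devs."""
    if len(history) < min_samples:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return current > mu  # flat baseline: any increase stands out
    return (current - mu) / sigma > threshold


# Hypothetical hourly policy-read counts for one agent.
baseline_reads = [10, 12, 11, 9, 10, 11]
```

With this baseline, a sudden jump to 60 reads in an hour is flagged while 11 is not. Only upward spikes are treated as anomalies here, since the concern is over-collection of data.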

Data security and residency controls are treated as non‑negotiable. ScienceSoft advocates encryption in transit and at rest, field‑level protections for regulated data, private endpoints for model inference, tokenisation of identifiers and data residency enforcement so cases remain in the correct legal geography. The company also recommends embedding threat detection, vulnerability scanning and data‑security intelligence into the agentic stack. This mirrors broader market practice where vendors pair fraud detection agents with OCR, digital‑footprinting and provider profiling to reduce payouts while preserving evidentiary custody. [1][6][5]

For insurers seeking a pragmatic launch path, the blueprint stresses managed services and connectors to accelerate time‑to‑value. ScienceSoft advises using frameworks like LangGraph or LangChain and cloud provider agent toolchains to minimise bespoke engineering, then integrating via event‑driven patterns (webhooks, EventBridge or Kafka) so agentic workflows have clean entry and exit points with existing claims platforms. The recommended rollout pattern is shadow mode followed by blue‑green and phased activation by line of business; parallel vendor offerings highlight similar integration strategies and fast‑start templates to reduce implementation cost and risk. [1][2][3]
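Shadow mode means the agent runs alongside the existing process while only the existing decision takes effect. A minimal sketch of the comparison step follows; `shadow_compare`, the two decision functions and the claim thresholds are hypothetical stand-ins, not part of the blueprint.

```python
from typing import Callable


def shadow_compare(cases: list,
                   live_decision: Callable[[dict], str],
                   agent_decision: Callable[[dict], str]):
    """Return (agreement rate, disagreements); the agent's output is only logged."""
    disagreements = []
    for case in cases:
        live = live_decision(case)      # this decision is the one acted on
        shadow = agent_decision(case)   # this one is recorded for review only
        if live != shadow:
            disagreements.append(
                {"claim_id": case["claim_id"], "live": live, "shadow": shadow}
            )
    if not cases:
        return None, disagreements
    return 1 - len(disagreements) / len(cases), disagreements


# Hypothetical claims and rules used purely to exercise the comparison.
claims = [
    {"claim_id": "C-1", "amount": 500},
    {"claim_id": "C-2", "amount": 5_000},
    {"claim_id": "C-3", "amount": 15_000},
    {"claim_id": "C-4", "amount": 30_000},
]
live_rule = lambda c: "refer" if c["amount"] > 20_000 else "pay"
agent_rule = lambda c: "refer" if c["amount"] > 10_000 else "pay"
agreement, diffs = shadow_compare(claims, live_rule, agent_rule)
```

Reviewing the disagreement list, rather than only the headline agreement rate, is what tells a team whether the agent is ready for phased activation on a given line of business.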

Data preparation and evidence custody complete the operational checklist. ScienceSoft recommends normalising identifiers, tokenising contact data, applying automated data quality checks, storing evidence in immutable object stores, and building vector and graph retrieval pipelines for RAG. These steps make agentic reasoning reproducible and reusable across other LLM applications. Vendor materials across the sector reinforce that clean identifiers, reliable feature stores and traceable embeddings are prerequisites for consistent fraud detection performance. [1][3][2]
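The first two preparation steps, normalising identifiers and tokenising contact data, can be sketched as follows. The example assumes US phone numbers and uses a keyed hash (HMAC-SHA-256) as the token so that the same number always maps to the same token without being reversible; the function names and the key are placeholders, and a real key would come from a managed secret store.

```python
import hashlib
import hmac
import re


def normalise_phone(raw: str) -> str:
    """Reduce a US phone number to 10 digits (assumed format for this sketch)."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the US country code
    return digits


def tokenise(value: str, key: bytes) -> str:
    """Deterministic, non-reversible token derived with a secret key."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


DEMO_KEY = b"demo-key-not-for-production"  # placeholder secret for illustration

token_a = tokenise(normalise_phone("+1 (617) 555-0100"), DEMO_KEY)
token_b = tokenise(normalise_phone("617.555.0100"), DEMO_KEY)
```

Normalising before tokenising is what allows two differently formatted records of the same number to be linked across claims without exposing the number itself to the agents.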

The cumulative message is that agentic fraud detection’s promise is real but conditional: accuracy and efficiency gains are attainable only when narrow agents, robust orchestration, AI firewalls, tamper‑evident traceability, active observability and strict data controls are implemented together. According to the original report, when these safeguards are aligned, special‑investigations units get defensible leads, adjusters receive auditable recommendations, security teams reduce exposure, and engineers obtain governance facts that stand up to regulators. ScienceSoft concludes by offering tailored launch planning for insurers that want to move from pilots to governed production. [1]

📌 Reference Map:

- [1] (ScienceSoft) - Paragraph 1, Paragraph 2, Paragraph 3, Paragraph 4, Paragraph 5, Paragraph 6, Paragraph 7, Paragraph 8, Paragraph 9, Paragraph 10

- [2] (Glide) - Paragraph 3, Paragraph 7, Paragraph 9

- [3] (Supervity) - Paragraph 3, Paragraph 7, Paragraph 9

- [4] (Kirha) - Paragraph 5

- [5] (RhinoAgents) - Paragraph 5, Paragraph 6

- [6] (Atna) - Paragraph 5, Paragraph 8

- [7] (Salesforce) - Paragraph 4

Source: Noah Wire Services