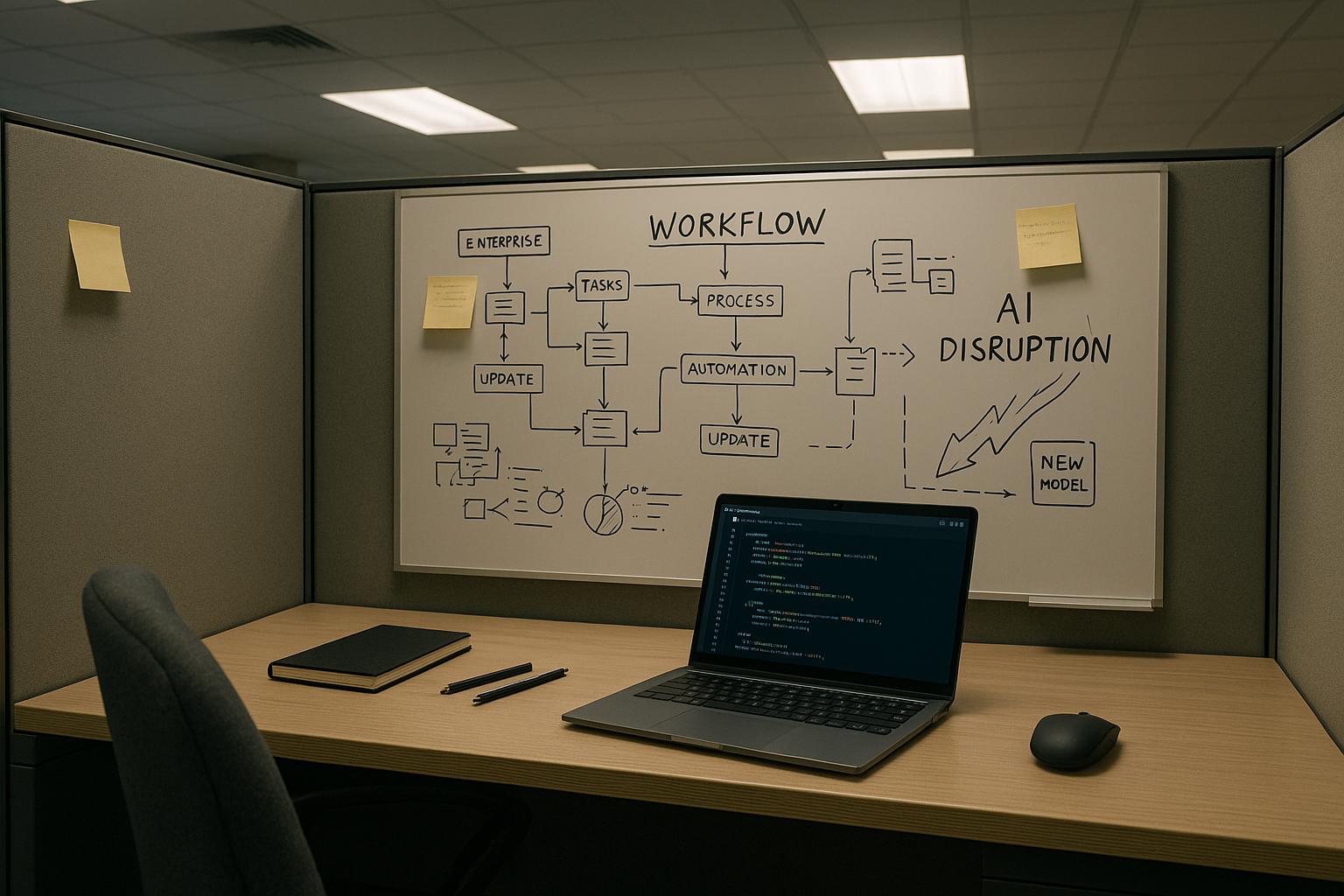

Every technology has its moment, and I argue that emerging AI technologies are now in theirs: they are moving from experiment to embedded capability that quietly removes friction across businesses. Since the COVID-19 era, AI has moved out of labs and into everyday workflows, from automation tools such as n8n to developer aids like Copilot and design assistants like Figma Make. What matters now is practical impact rather than hype. [1][4][7]

Agentic AI and autonomous agents are central to that transition because they change AI from a reactive assistant into an entity that can take sustained action across systems. Industry commentators and enterprise forecasts point to agents evolving from simple chatbots into multi-step, service-interfacing systems that orchestrate workflows, manage handoffs and reduce human bottlenecks. According to Forbes, these agentic systems are already being positioned as a top enterprise trend for 2026. [1][2][5]

In practice, autonomous agents are proving useful in three clear operational areas. First, workflow orchestration: agents can trigger actions, manage approvals and resolve routine blockers so teams avoid coordination slowdowns. Second, internal decision support: agents that pull from multiple datasets can flag risks, surface patterns and suggest next steps without replacing human judgement. Third, customer operations: agents that track issues across systems and predict problems ahead of time turn reactive support into proactive retention work. These functions mirror both real-world adoption and analyst predictions about agent-driven automation. [1][2][3]

Multimodal AI (models that combine text, images, audio and sensor inputs) follows the same logic of reducing friction by meeting business needs in context. Where text-only systems helped people get comfortable with AI, multimodal systems let AI interpret sketches, screenshots, voice and telemetry together, improving product design cycles, quality inspection and support resolution. Industry writing and vendor roadmaps indicate that multimodal capabilities will be a key way organisations capture richer context and reduce error rates. [1][3][7]

AI-powered automation platforms are extending that capability into everyday operational work by embedding intent understanding and exception handling into workflow engines. Tools that marry models with orchestration engines are already being used for contextual lead routing, invoice processing with anomaly detection, adaptive approval flows and dynamic onboarding. Market analysis and practitioner reports show that such platforms can automate processes previously considered too complex to trust to software alone. [1][4][7]
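As a rough illustration of invoice processing with anomaly detection and exception handling, the sketch below flags invoices whose amount deviates sharply from a vendor's history and routes them to a person instead of approving them automatically. It is a minimal sketch under stated assumptions, not any vendor's implementation: the `Invoice` type, the `route_invoice` function, the z-score rule and the thresholds are all hypothetical choices made for this example.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Invoice:
    vendor: str
    amount: float


def route_invoice(invoice: Invoice, history: list[float],
                  z_threshold: float = 3.0, min_history: int = 5) -> str:
    """Return "auto_approve" or "human_review" for one invoice.

    `history` holds past amounts for the same vendor (hypothetical data).
    The z-score rule is an assumed stand-in for whatever model a real
    platform would use.
    """
    # Too little history to judge: treat it as an exception, not a pass.
    if len(history) < min_history:
        return "human_review"

    mu = mean(history)
    sigma = stdev(history)

    # Identical past amounts give sigma == 0; any deviation is then unusual.
    if sigma == 0:
        return "auto_approve" if invoice.amount == mu else "human_review"

    z_score = abs(invoice.amount - mu) / sigma
    return "human_review" if z_score > z_threshold else "auto_approve"


if __name__ == "__main__":
    past_amounts = [100.0, 102.0, 98.0, 101.0, 99.0]
    print(route_invoice(Invoice("Acme", 101.0), past_amounts))
    print(route_invoice(Invoice("Acme", 500.0), past_amounts))
```

The design point is that the exception path is explicit: anything the rule cannot judge confidently goes to a human rather than being silently approved.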

At the same time, vertical or industry-specific AI models are gaining traction because generalist models often require heavy correction to be useful in regulated, domain-dense environments. Healthcare, manufacturing, retail and finance are all cited as areas where domain-tuned models deliver measurable accuracy and trust improvements, reducing false positives in fraud detection, improving predictive maintenance and supporting clinically relevant documentation. Analyst forecasts emphasise that verticalisation of models will be a major vector of value by 2026. [1][3][6]

Software development and product teams are already seeing concrete gains from AI assistance, but those gains come with caveats. Productivity tools like Copilot and design accelerants like Figma Make speed feature delivery and reduce repetitive work, lowering cost and improving collaboration. Yet leaders must guard against overreliance: AI-generated outputs are not always production-ready and can introduce technical debt or security blind spots if not reviewed. That balance, using AI as collaborator while retaining human ownership of critical decisions, is highlighted across practitioner accounts. [1][4]

Responsible AI and governance are no longer optional. As AI moves into hiring, pricing, approvals and customer interactions, businesses face legal, reputational and operational risk if systems are ungoverned. Notably, the US President signed an Executive Order on 11 December 2025 to protect American AI innovation from a patchwork of state rules, underscoring how rapidly policy is adapting. Organisations and platform vendors are responding with built-in governance features, such as role-based controls, audit logs, human-in-the-loop workflows and model monitoring, that aim to make emerging AI technologies auditable and controllable. [1][5]
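To make those governance features concrete, the following sketch combines a role check, an audit log and a human-in-the-loop gate around a single AI-proposed action. It is illustrative only: `ROLE_PERMISSIONS`, `GovernedAction` and the event names are hypothetical and do not correspond to any particular platform's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping; real platforms define their own.
ROLE_PERMISSIONS = {
    "analyst": {"propose"},
    "manager": {"propose", "approve"},
}


@dataclass
class GovernedAction:
    description: str
    high_risk: bool
    approved: bool = False
    audit_log: list = field(default_factory=list)

    def _record(self, actor: str, event: str) -> None:
        # Every decision is appended with a UTC timestamp, so it can be audited.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "event": event,
        })

    def approve(self, actor: str, role: str) -> bool:
        # Role-based control: only roles holding "approve" may sign off.
        if "approve" not in ROLE_PERMISSIONS.get(role, set()):
            self._record(actor, "approval_denied")
            return False
        self.approved = True
        self._record(actor, "approved")
        return True

    def execute(self, actor: str) -> str:
        # Human-in-the-loop gate: high-risk actions wait for approval.
        if self.high_risk and not self.approved:
            self._record(actor, "blocked_pending_review")
            return "pending_review"
        self._record(actor, "executed")
        return "executed"


if __name__ == "__main__":
    action = GovernedAction("Adjust customer pricing tier", high_risk=True)
    print(action.execute("pricing-agent"))
    action.approve("dana", "manager")
    print(action.execute("pricing-agent"))
    print([entry["event"] for entry in action.audit_log])
```

Keeping the log on the action itself is a simplification; a production system would more plausibly write to an append-only store.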

Successful adoption requires discipline: clear outcomes, data readiness and a pilot-first approach. Common failures stem from chasing tools instead of business problems, underestimating internal readiness and treating AI adoption as a switch rather than a capability to be grown. Industry analysts and corporate guides recommend prioritising clean, accessible data, upskilling existing teams, and running focused pilots that measure value within months rather than years. The evidence suggests that the companies that win will be not the fastest to adopt AI but the most disciplined. [1][2][3][7]

## Reference Map:

- [1] (DigitalIsSimple blog) - Paragraph 1, Paragraph 2, Paragraph 3, Paragraph 4, Paragraph 5, Paragraph 6, Paragraph 7, Paragraph 8, Paragraph 9

- [2] (Forbes) - Paragraph 2, Paragraph 3, Paragraph 9

- [3] (IBM) - Paragraph 3, Paragraph 4, Paragraph 6, Paragraph 9

- [4] (Amity Solutions) - Paragraph 1, Paragraph 5, Paragraph 7

- [5] (Deloitte) - Paragraph 2, Paragraph 8

- [6] (Vistage) - Paragraph 6

- [7] (Hiteshi) - Paragraph 1, Paragraph 4, Paragraph 5, Paragraph 9

Source: Noah Wire Services