LLM-aware internal linking has emerged as a core architectural practice for sites that expect to be discovered and cited by AI-driven search, conversational assistants and retrieval-augmented systems. According to Single Grain, links are no longer only vehicles for PageRank and human navigation; they are also semantic signals that help large language models reconstruct a site’s meaning from fragments such as headings, paragraphs and anchor text. When those signals are noisy or generic, models struggle to retrieve the right chunks, which leads to weaker summaries, missed citations and diminished visibility in AI Overviews. [1][2]

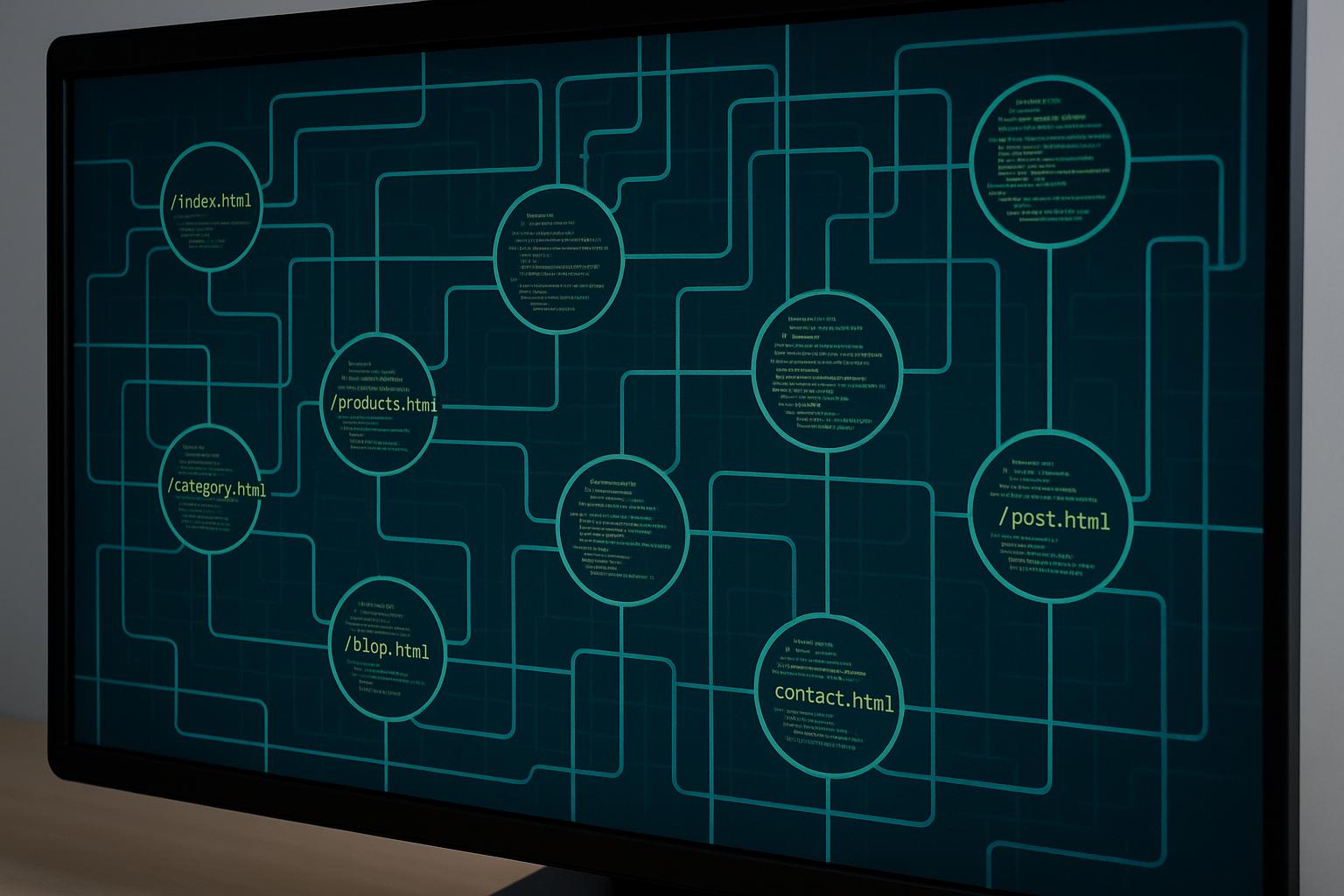

This shift changes the unit of optimisation from isolated pages to an interconnected knowledge graph. Industry commentary and practical guides argue that anchor text, placement and cross-link patterns collectively encode relationships (cause and effect, prerequisite and outcome, problem and solution) that an LLM can use to infer “who does what for whom, under which conditions.” Single Grain recommends treating anchors as explicit relationship statements (for example, "onboarding’s impact on churn risk") rather than generic prompts like "learn more". [1][2]

How AI crawlers and retrieval pipelines interpret links explains why this matters. Most retrieval stacks crawl pages, segment content into chunks, compute vector embeddings, and then retrieve those vectors in response to queries. Embedded relationships and nearby contextual anchors become metadata that enriches embeddings and raises confidence about how chunks relate. Growth Marshal and Embedded Retrieval Optimization resources similarly stress aligning content structure to embedding models so that semantic retrieval surfaces the most relevant passages. [1][4][6]
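The chunk, embed and retrieve steps described above can be sketched in a few lines. This is a minimal illustration, not any vendor's pipeline: the `embed` function is a toy term-count stand-in for a real embedding model, and the function names, the sample sentences and the query are all assumptions made for the example. It shows how a relationship-rich anchor inside a chunk gives a query something to match, while a generic anchor does not.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and keep alphanumeric runs only.
    return re.findall(r"[a-z0-9]+", text.lower())


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model (assumption): raw term counts.
    return Counter(tokenize(text))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def chunk(text: str, max_words: int = 40) -> list[str]:
    # Fixed-size word windows; real pipelines often split on headings instead.
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    # Rank chunks by similarity to the query and return the top k.
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


# Two hypothetical chunks that differ only in their anchor text.
generic = "Customers who skip setup often leave early. Learn more."
rich = "Customers who skip setup often leave early. See onboarding's impact on churn risk."
query = "onboarding churn risk"

print(cosine(embed(query), embed(generic)))  # no shared terms with the query
print(retrieve(query, [generic, rich]))      # the chunk with the descriptive anchor ranks first
```

A production system would replace `embed` with a learned model, where the effect is less binary than in this term-overlap toy, but the direction of the argument is the same: descriptive anchors add retrievable meaning to the chunk that contains them.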

Practical auditing begins with a content inventory and entity classification: tag URLs by entity type (product, feature, industry, problem, solution), map links by purpose (navigational, structural, contextual), and evaluate anchors for relationship richness rather than keyword-only phrasing. Single Grain outlines prioritising fixes where LLM exposure affects revenue (educational-to-conversion flows, docs-to-upgrade paths) and pairing link fixes with structured page summaries to improve AI snippets. AirOps and RankTracker emphasise the role of AI tooling in surfacing orphaned pages and optimising link distribution at scale. [1][3][5]
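As a rough sketch of what such an audit could look like in code, the example below tags pages by entity type, labels each link by purpose, finds pages with no inbound links and flags contextual anchors that look generic. The `Link` record, the `GENERIC_ANCHORS` list, the three-word threshold and the sample URLs are all hypothetical choices for illustration, not rules taken from the cited sources.

```python
from dataclasses import dataclass

# Hypothetical list of anchors treated as generic (assumption).
GENERIC_ANCHORS = {"learn more", "click here", "read more", "here", "this page"}


@dataclass(frozen=True)
class Link:
    source: str
    target: str
    anchor: str
    purpose: str  # "navigational", "structural" or "contextual"


def find_orphans(pages: dict[str, str], links: list[Link]) -> set[str]:
    # A page is orphaned if no other page links to it.
    linked = {link.target for link in links if link.source != link.target}
    return set(pages) - linked


def is_relationship_rich(anchor: str, min_words: int = 3) -> bool:
    # Crude heuristic (assumption): not a stock phrase and at least min_words long.
    text = anchor.strip().lower()
    return text not in GENERIC_ANCHORS and len(text.split()) >= min_words


def audit(pages: dict[str, str], links: list[Link]) -> dict:
    contextual = [link for link in links if link.purpose == "contextual"]
    weak = [link for link in contextual if not is_relationship_rich(link.anchor)]
    rich_share = (len(contextual) - len(weak)) / len(contextual) if contextual else 0.0
    return {
        "orphans": find_orphans(pages, links),
        "weak_anchors": weak,
        "rich_share": rich_share,
    }


# URL -> entity type, as in the inventory step described above.
pages = {
    "/features/onboarding": "feature",
    "/problems/churn": "problem",
    "/docs/admin-setup": "solution",
}
links = [
    Link("/features/onboarding", "/problems/churn",
         "onboarding's impact on churn risk", "contextual"),
    Link("/problems/churn", "/features/onboarding", "learn more", "contextual"),
]

report = audit(pages, links)
print(report["orphans"])     # pages nothing links to
print(report["rich_share"])  # share of contextual anchors passing the heuristic
```

A word-count threshold is only a proxy for relationship richness; a fuller audit might check that the anchor names both the source and target entities.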

Different site archetypes demand tailored blueprints. For B2B SaaS, marketing pages should funnel into docs with anchors indicating persona and lifecycle stage ("implementation guide for admins"); docs should link back to case studies and upgrade paths. E‑commerce hubs should use facet-rich anchors ("waterproof hiking boots for winter conditions") to support AI-driven product finders. Publishers must tune related-article modules to add complementary angles rather than duplicates. Support centres and API docs require extreme precision so internal RAG assistants can reliably map errors to fixes. Single Grain’s framework and the sector analyses reflect these template-level distinctions. [1][2]

Scale requires automation with strong guardrails. AI-generated link suggestions can be constrained to candidate sets that share primary entities or topic clusters and filtered by rules such as maximum contextual links per word count and anchor composition requirements. Growth Marshal’s and Single Grain’s guidance recommends programmatic linking from taxonomies where appropriate, combined with dashboards tracking link depth, density and relationship-rich anchor proportions to avoid crawl traps and topical dilution. [1][4][6]
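The guardrails described here can be approximated with two small functions: one that restricts suggestions to candidates sharing at least one entity with the source page and caps them by a per-word-count budget, and one that computes click depth from the home page for a dashboard. The function names, the `words_per_link` default of 150 and the sample data are assumptions for illustration only.

```python
from collections import deque


def filter_candidates(
    source_entities: set[str],
    candidates: dict[str, set[str]],
    word_count: int,
    words_per_link: int = 150,  # hypothetical density cap (assumption)
) -> list[str]:
    # Budget of contextual links allowed for a page of this length.
    budget = word_count // words_per_link
    # Keep only candidates sharing at least one entity with the source page.
    scored = [
        (len(source_entities & entities), url)
        for url, entities in candidates.items()
        if source_entities & entities
    ]
    # Most shared entities first; URL as a deterministic tie-breaker.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [url for _, url in scored[:budget]]


def link_depths(graph: dict[str, list[str]], root: str) -> dict[str, int]:
    # Breadth-first search: minimum number of clicks from root to each page.
    depths = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in graph.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


candidates = {
    "/a": {"onboarding", "churn"},
    "/b": {"churn"},
    "/c": {"pricing"},
    "/d": {"onboarding"},
}
suggested = filter_candidates({"onboarding", "churn"}, candidates, word_count=320)
print(suggested)  # at most 320 // 150 = 2 links, best entity overlap first

graph = {"/": ["/features"], "/features": ["/docs"], "/docs": ["/"]}
print(link_depths(graph, "/"))
```

Pages missing from the `link_depths` result are unreachable from the root, which makes the same function usable as a quick orphan check.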

Adopting an LLM-first linking strategy also aligns with emerging differences between how LLMs and traditional search engines index content. RankTracker notes that LLM-oriented systems build semantic summaries, prioritise trusted sources and sometimes fetch fewer pages, so strong topical clustering and machine-readable structure increase the chance that content will be selected and excerpted. Embedded Retrieval Optimization adds that prompt-surface and chunk-level design influence which passages are retrieved and why. Together, these perspectives imply that internal links should be engineered both for discovery and for the semantic clarity that embeddings require. [4][5]

Turning internal linking into a competitive advantage demands governance: encode topics, entities and relationships as first-class citizens in sitemaps and templates; automate where it scales; and retain human review on high-impact pages. Single Grain frames this as a move from incremental SEO tweaks to an AI-first architecture that connects site structure, retrieval performance and business metrics. Practitioners should measure retrieval quality, answer coverage and citation rates alongside traditional ranking metrics to assess success in generative and RAG-driven contexts. [1][2][3]
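Two of the metrics named above can be given simple working definitions. The sketch below assumes retrieval quality is measured as recall at k over a labelled set of relevant chunks, and citation rate as the share of sampled AI answers citing at least one URL on the site's domain. Both definitions, the function names and the sample data are assumptions; the cited sources do not prescribe specific formulas.

```python
from urllib.parse import urlparse


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    # Fraction of the relevant chunks that appear in the top-k results.
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def citation_rate(answers: list[list[str]], domain: str) -> float:
    # Share of answers whose cited URLs include at least one on `domain`.
    if not answers:
        return 0.0
    cited = sum(
        1 for urls in answers
        if any(urlparse(url).netloc == domain for url in urls)
    )
    return cited / len(answers)


# Hypothetical evaluation data: chunk IDs and URLs cited per sampled answer.
print(recall_at_k(["a", "b", "c"], {"a", "c", "d"}, k=2))
print(citation_rate(
    [["https://example.com/x"], ["https://other.org/y"], []],
    "example.com",
))
```

Tracking these before and after a linking change gives a like-for-like comparison, provided the query set and the answer sample stay fixed.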

📌 Reference Map:

- [1] (Single Grain) - Paragraph 1, Paragraph 2, Paragraph 3, Paragraph 4, Paragraph 5, Paragraph 6, Paragraph 8

- [2] (Single Grain summary) - Paragraph 1, Paragraph 2, Paragraph 5, Paragraph 8

- [3] (AirOps) - Paragraph 4, Paragraph 8

- [4] (Growth Marshal) - Paragraph 3, Paragraph 6, Paragraph 7

- [5] (RankTracker) - Paragraph 4, Paragraph 7

- [6] (Growth Marshal duplicate) - Paragraph 3, Paragraph 6

Source: Noah Wire Services